
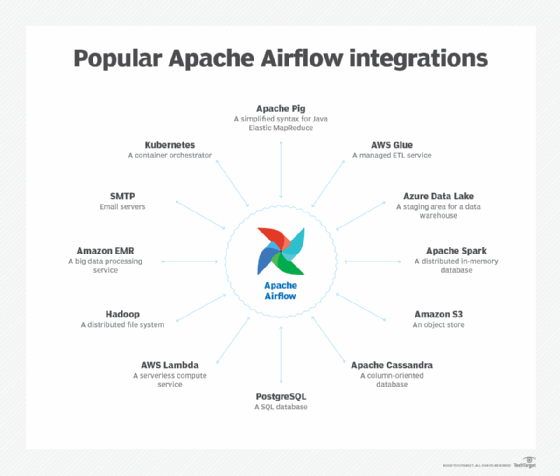

Airflow started at Airbnb in October 2014 as a solution for managing the company's increasingly complex workflows. Creating Airflow allowed Airbnb to programmatically author and schedule its workflows and monitor them through the built-in Airflow user interface. The project was open source from the beginning, becoming an Apache Incubator project in March 2016 and a top-level Apache Software Foundation project in January 2019.

Airflow uses directed acyclic graphs (DAGs) to manage the task workflow. Its rich user interface makes it easy to visualize pipelines running in production, monitor progress, and troubleshoot issues when needed. It connects with multiple data sources and can send an alert via email or Slack when a task completes or fails. Because Airflow is distributed, scalable, and flexible, it is well suited to orchestrating complex business logic.

What Is Apache Airflow Used For?

Apache Airflow is used for the scheduling and orchestration of data pipelines or workflows. Orchestration of data pipelines refers to the sequencing, coordination, scheduling, and managing of complex data pipelines from diverse sources. These pipelines deliver data sets that are ready for consumption by business intelligence applications, data science, and machine learning models that support big data applications.

Generally speaking, you can use Apache Airflow to:

- Define, schedule, and monitor workflows.
- Orchestrate third-party systems to execute tasks.
- Provide a web interface for excellent visibility and management capabilities.

Airflow uses DAGs to manage workflow orchestration. Tasks and dependencies are defined in Python, and Airflow then manages the scheduling and execution. It runs tasks, which are sets of activities, via operators, which are templates for tasks. DAGs can be run on a defined schedule. Developers can create operators for any source or destination.
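To make the DAG idea concrete, here is a minimal sketch of how task dependencies determine execution order. It deliberately does not use Airflow's own API: it relies only on Python's standard `graphlib` module, and the task names (`extract`, `transform`, `load`, `report`) and the helpers `make_operator` and `run_pipeline` are hypothetical names chosen for illustration.

```python
from graphlib import CycleError, TopologicalSorter


def make_operator(name, log):
    """Return a callable standing in for an operator (a template for a task)."""
    def run():
        log.append(f"ran {name}")
    return run


def run_pipeline(dependencies, operators):
    """Run each task only after all of its upstream tasks; return the run order."""
    order = []
    # static_order() yields tasks so that every dependency comes first.
    # It raises CycleError if the graph is not acyclic.
    for task in TopologicalSorter(dependencies).static_order():
        operators[task]()
        order.append(task)
    return order


# Hypothetical pipeline: each task maps to the set of tasks it depends on.
dependencies = {
    "extract": set(),
    "transform": {"extract"},
    "load": {"transform"},
    "report": {"load"},
}

log = []
operators = {name: make_operator(name, log) for name in dependencies}
order = run_pipeline(dependencies, operators)
print(order)  # ['extract', 'transform', 'load', 'report']
```

A graph with a cycle (for example, two tasks that depend on each other) makes `run_pipeline` raise `CycleError`, which is why the graph has to be acyclic: without that property there is no valid order in which to run the tasks.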
Most businesses have to deal with different workflows, which include processes like collecting data from multiple databases, preprocessing it, uploading it, and reporting on it. It helps if these daily tasks trigger automatically at a defined time, and it is also important that the tasks execute in order. Apache Airflow is one tool that can help you build and maintain such a workflow.

Getting Started

Apache Airflow is an open-source workflow management platform to programmatically author, schedule, and monitor workflows. Using it, you can easily schedule and run your complex data pipelines. Apache Airflow, or simply Airflow, ensures that each task of your data pipeline executes in the correct order and gets the required resources.

The Executor runs tasks and pushes them to workers.

The database stores all the pertinent information (tasks, schedule periods, statistics from each sprint, etc.).

The web server serves as the Apache Airflow user interface. It helps track the tasks' status and progress and view log data from remote repositories.

The scheduler monitors all the DAGs, handles workflows, and submits jobs to the Executor.

Hooks are interfaces to third-party services and external platforms (databases and API resources). Hooks should not contain sensitive information, to prevent data leakage.

S3Sensor checks the availability of an object by its key in an S3 bucket.
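The sensor behaviour described above (checking whether an object exists under a key) can be sketched in plain Python. This is a toy illustration under stated assumptions, not Airflow's sensor API: the class name `KeySensor` is hypothetical, and a plain dictionary stands in for the S3 bucket so that the example runs without any external service.

```python
import time


class KeySensor:
    """Toy sensor: waits until `key` exists in `bucket`.

    `bucket` is a plain dict standing in for an S3 bucket (an assumption
    made so the sketch is self-contained).
    """

    def __init__(self, bucket, key, poke_interval=0.01, timeout=0.1):
        self.bucket = bucket
        self.key = key
        self.poke_interval = poke_interval  # seconds between checks
        self.timeout = timeout              # seconds before giving up

    def poke(self):
        """Single check: is the object available under the key?"""
        return self.key in self.bucket

    def wait(self):
        """Poll until the key appears (True) or the timeout expires (False)."""
        deadline = time.monotonic() + self.timeout
        while True:
            if self.poke():
                return True
            if time.monotonic() >= deadline:
                return False
            time.sleep(self.poke_interval)


bucket = {}
sensor = KeySensor(bucket, "data/file.csv", timeout=0.05)
print(sensor.wait())  # the key is missing, so this gives up after the timeout

bucket["data/file.csv"] = b"col_a,col_b\n"
print(sensor.wait())  # the key is now present, so this succeeds immediately
```

Downstream tasks would only start once `wait()` reports that the object is available, which is the role a sensor plays in a pipeline.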
